Large Language Models Survey: Capabilities, Limitations & Key Insights

Table of Contents

- Introduction to the Large Language Models Survey

- Evolution of Language Models to Modern LLMs

- Scaling Laws and LLM Performance

- Prominent Large Language Model Families

- Emergent Capabilities of Large Language Models

- Chain-of-Thought and Reasoning Abilities

- Large Language Models in Healthcare and Finance

- LLM Foundations: Pre-training and Architecture

- Retrieval-Augmented Generation and Planning

- Limitations, Challenges, and Future Directions

📌 Key Takeaways

- Emergent abilities — LLMs exhibit capabilities beyond their training objectives, including in-context learning, instruction following, and step-by-step reasoning at sufficient scale.

- Scaling laws — Model performance follows predictable mathematical relationships with model size, dataset size, and compute, enabling accurate performance predictions.

- Chain-of-thought reasoning — CoT prompting dramatically improves LLM performance on complex tasks, with effectiveness increasing at 60B+ parameters.

- Domain specialization — LLMs adapted for healthcare, finance, and law outperform general models on specialized tasks through domain-specific pre-training and fine-tuning.

- Critical limitations persist — Hallucination, bias, context window constraints, and interpretability challenges remain key obstacles for reliable LLM deployment.

Introduction to the Large Language Models Survey

The rapid advancement of artificial intelligence has been fundamentally reshaped by the development of large language models (LLMs) built on the transformer architecture. This comprehensive large language models survey, authored by Matarazzo and Torlone (arXiv:2501.04040), provides a thorough examination of these models’ foundational components, scaling mechanisms, architectural strategies, and domain-specific applications. The survey addresses critical questions about how LLMs generalize across diverse tasks, exhibit planning and reasoning abilities, and whether emergent capabilities can be systematically enhanced.

Models like GPT-4, LLaMA, Claude, and Gemma now exhibit remarkable performance across a variety of language-related tasks — text generation, question answering, translation, and summarization — often rivaling human-like comprehension. More intriguingly, these large language models have demonstrated emergent abilities extending far beyond their core text prediction function, showing proficiency in commonsense reasoning, code generation, arithmetic operations, and complex multi-step problem solving.

This article distills the key findings from the survey, providing accessible insights into the current state of LLM research, the mechanisms driving their capabilities, and the inherent limitations that researchers and practitioners must navigate. Whether you’re a technology leader evaluating AI adoption or a researcher tracking the field, understanding the landscape mapped by this survey is essential for informed decision-making in the era of AI-driven transformation.

Evolution of Language Models to Modern LLMs

The survey traces the development of language models through four distinct evolutionary stages, each representing a fundamental shift in capability and approach. Understanding this trajectory is essential for appreciating how today’s large language models achieved their current level of sophistication.

The journey began with Statistical Language Models (SLMs), which captured word frequencies and co-occurrences using the Markov assumption — predicting word probability based only on the previous n words. While foundational, these n-gram models were limited by exponential parameter growth and inability to capture long-range dependencies in language.

The second stage introduced Neural Language Models, leveraging recurrent neural networks (RNNs) and long short-term memory (LSTM) networks to capture longer-range dependencies. The landmark word2vec model by Mikolov et al. represented a pivotal shift from word sequencing to learning distributed representations of words, enabling models to understand semantic relationships.

Pre-trained Language Models (PLMs) marked the third stage, with models like ELMo and BERT introducing the “pre-training and fine-tuning” paradigm. BERT’s bidirectional transformer architecture demonstrated that pre-trained models could achieve state-of-the-art performance across diverse NLP tasks with minimal task-specific fine-tuning. This paradigm shift fundamentally changed how the field approached language understanding.

The current era of Large Language Models emerged when researchers discovered that increasing model scale — from millions to billions and trillions of parameters — yielded not just quantitative improvements but qualitative leaps in capability. These emergent abilities, as defined by Wei et al., represent instances where “quantitative changes in a system result in qualitative changes in behavior.” This phase change at scale is what distinguishes modern LLMs from their predecessors and is a central theme of the survey.

Large Language Models Survey: Scaling Laws and Performance

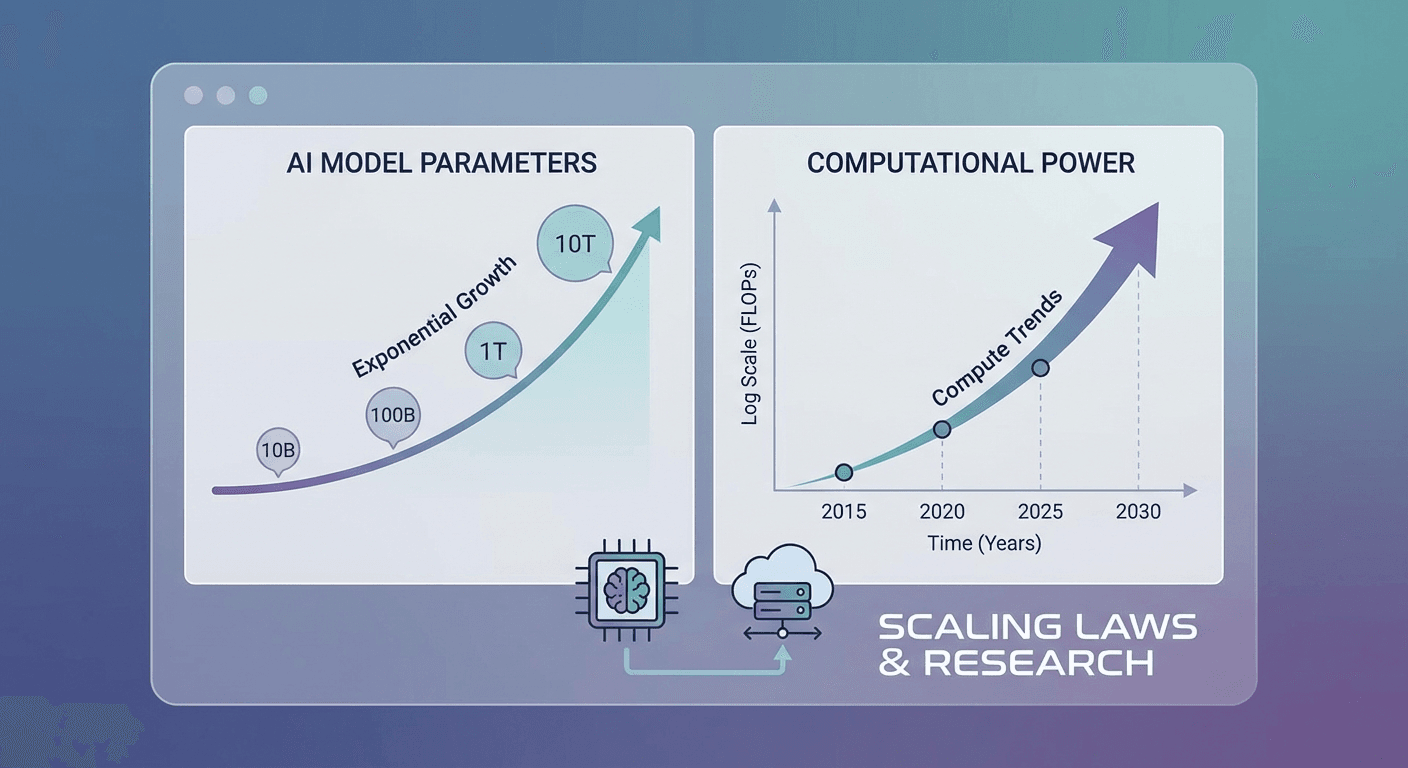

The scaling law is a fundamental principle underlying LLM development, and the survey provides detailed analysis of two representative frameworks that have guided the field’s resource allocation and model design decisions.

The KM scaling law, proposed by Kaplan et al. at OpenAI, establishes that model performance (measured by cross-entropy loss) scales as a power law with model size (N), dataset size (D), and compute (C). The mathematical relationships — L(N) = (Nc/N)^αN, L(D) = (Dc/D)^αD, L(C) = (Cc/C)^αC — were derived from experiments spanning model sizes from 768M to 1.5B parameters and data sizes from 22M to 23B tokens. This law revealed a robust interdependence among the three scaling factors.

The Chinchilla scaling law, proposed by Google DeepMind’s Hoffmann et al., offered an important correction. By experimenting with models from 70M to 16B parameters on 5B to 500B tokens, they showed that the KM law over-allocated compute budget to model size at the expense of data. The Chinchilla law argues that model size and data should be increased in roughly equal proportion, leading to the insight that many existing models were significantly undertrained relative to their parameter counts.

Perhaps the most fascinating aspect of scaling is the emergence of phase changes in model capabilities. While performance improves linearly as model size increases exponentially under the standard scaling law, certain abilities appear suddenly at specific scale thresholds — a phenomenon that has sparked significant debate. Schaeffer et al. argue that different metrics can reveal continuous improvement, challenging the concept of emergent abilities, while others maintain that the unpredictability of when and which abilities appear still supports emergence as a meaningful concept.

GPT-4’s development exemplified practical application of scaling laws, with OpenAI fitting the relationship L(C) = aC^b + c to predict the final model’s performance using runs with up to 10,000× less compute. This ability to accurately predict large model performance from small-scale experiments represents a major methodological advancement for the field.

Transform complex AI research papers into interactive experiences your audience will actually engage with.

Prominent Large Language Model Families

The survey provides comprehensive analysis of the major LLM families that have shaped the field, each contributing unique architectural innovations and demonstrating different approaches to scaling.

BERT (Google, 2018) introduced bidirectional context processing through its Masked Language Model (MLM) and Next Sentence Prediction (NSP) training objectives. While not large by current standards, BERT’s architecture set a new standard and inspired numerous derivatives including RoBERTa and ALBERT.

The GPT Series (OpenAI) represents the most prominent lineage in the LLM landscape. GPT-1 (110M parameters) introduced the transformer-based generative pre-training approach. GPT-2 (1.5B) demonstrated unprecedented text generation. GPT-3 (175B) introduced in-context learning, enabling few-shot and zero-shot task performance. GPT-4 expanded to multimodal capabilities, accepting both text and image inputs. The latest OpenAI o1 model represents a paradigm shift, using reinforcement learning to develop extended chain-of-thought reasoning, achieving record scores on mathematical olympiad qualifiers and competitive programming contests.

LLaMA (Meta AI) demonstrated that smaller, efficiently trained models can compete with much larger ones. LLaMA 13B outperformed GPT-3 (175B) on most benchmarks, while the 65B version competed with PaLM-540B despite being 10× smaller. The series introduced key architectural innovations including pre-normalization with RMSNorm, SwiGLU activation functions, and Rotary Positional Embeddings (RoPE). LLaMA 3 scaled to 405B parameters trained on 15 trillion tokens — a 15× increase from the original’s 1T tokens.

Claude (Anthropic) and Gemma (Google) round out the survey’s coverage. Claude 3.5 Sonnet excels in logical reasoning, mathematical problem-solving, and ethical reasoning, reflecting Anthropic’s focus on AI safety and alignment. Gemma, available in 2B and 7B configurations, prioritizes deployment efficiency, running on common developer hardware without specialized AI accelerators.

Emergent Capabilities of Large Language Models

The large language models survey identifies three primary emergent capabilities that distinguish modern LLMs from their predecessors, each representing a qualitative shift in what these systems can accomplish.

In-context learning (ICL), first formally observed in GPT-3, allows models to generate expected outputs for new tasks simply by being shown a few examples within the prompt — without any gradient updates or additional training. The survey notes a fundamental tension: “there’s seemingly a mismatch between pretraining (what it’s trained to do, which is next token prediction) and in-context learning (what we’re asking it to do).” Research by Dai et al. suggests that ICL implicitly performs meta-optimization through the attention mechanism, offering a partial explanation for this surprising capability.

Instruction following emerges through instruction tuning, where models fine-tuned on diverse multitask datasets with natural language descriptions can interpret and execute instructions for entirely unseen tasks. The survey cites experiments showing that LaMDA-PT begins to significantly outperform its untuned counterpart only at 68 billion parameters, while performance gains aren’t observed below 8 billion parameters — demonstrating the critical relationship between scale and emergent capability.

Step-by-step reasoning through chain-of-thought (CoT) prompting enables LLMs to solve complex multi-step problems that smaller models find impossible. Wei et al. demonstrated that CoT performance gains become pronounced at model sizes exceeding 100B parameters, with effectiveness varying across tasks. The survey provides empirical evidence suggesting that the presence of code in pre-training data may contribute to the emergence of these reasoning abilities — a hypothesis tested through experiments on LLaMA family models using the GSM8k dataset.

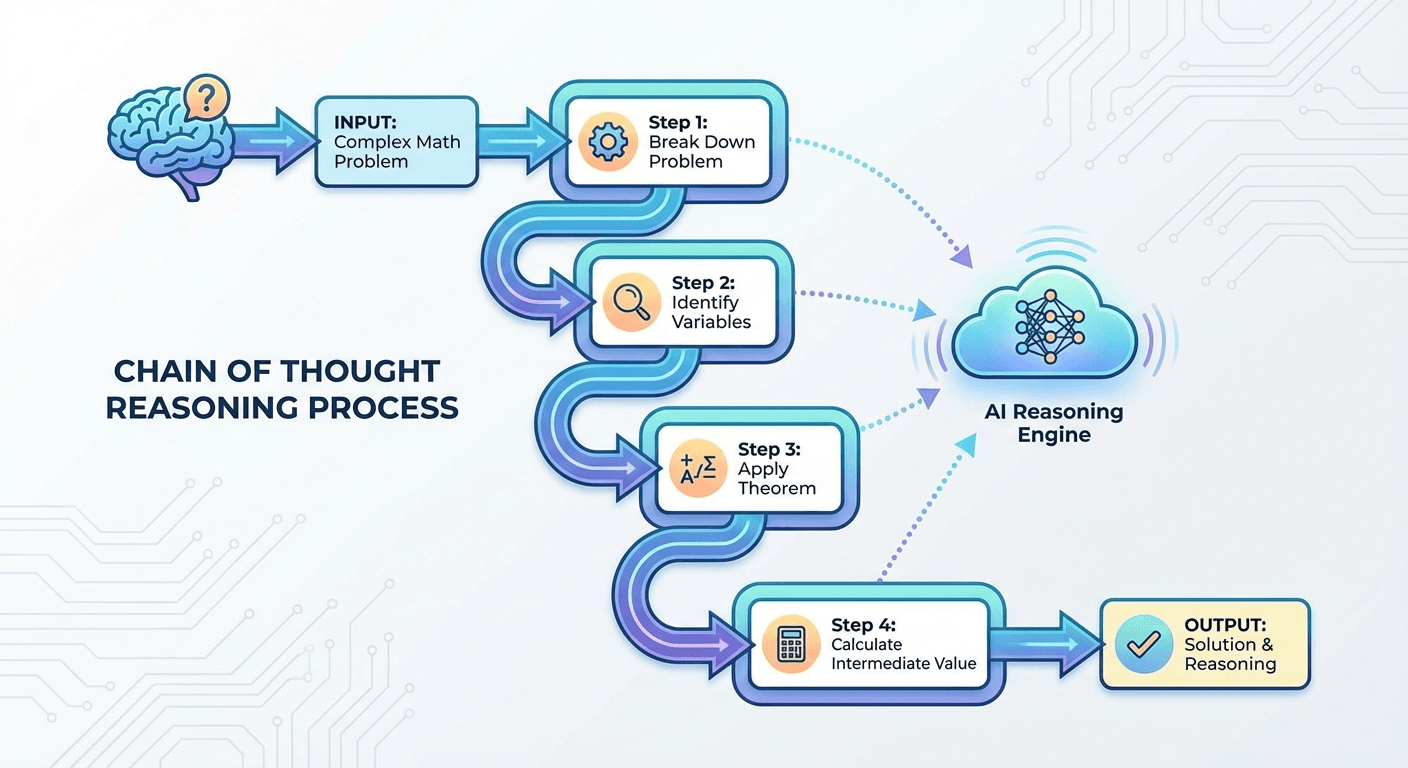

Chain-of-Thought and Large Language Models Reasoning

The survey devotes significant attention to chain-of-thought (CoT) prompting and its variants, which represent one of the most impactful techniques for enhancing LLM performance on complex reasoning tasks.

Standard CoT prompting involves integrating intermediate reasoning steps within the prompt, guiding the model to adopt a structured thought process. This approach is particularly beneficial for tasks requiring logical deduction, mathematical calculations, and multi-step problem solving. The survey explores several CoT variants and extensions that have emerged from the research community.

Program-of-Thoughts (PoT) extends CoT by having models generate executable code rather than natural language reasoning steps. This approach is particularly effective for mathematical and computational tasks where precision is critical. The survey’s empirical testing on LLaMA models using GSM8k and gsm-hard datasets compared CoT and PoT approaches, finding that code-based reasoning can outperform natural language reasoning for certain problem types.

The LLM-modulo framework represents another advancement covered in the survey, integrating external systems (calculators, knowledge bases, code executors) to handle tasks where pure language-based reasoning falls short. This hybrid approach acknowledges that LLMs excel at planning and natural language understanding but may need external verification for tasks requiring precise computation or factual accuracy.

OpenAI’s o1 model represents the cutting edge of reasoning-focused LLMs. Trained with reinforcement learning to produce long internal chains of thought before responding, o1 scored in the 89th percentile on competitive programming (Codeforces), placed among the top 500 U.S. students on the AIME math competition, and exceeded PhD-level accuracy on physics, biology, and chemistry benchmarks. The model’s successor, o3, achieved a record 87.5% on the Abstraction and Reasoning Corpus (ARC), approaching human-level performance of 84%.

Make AI research accessible — transform dense technical papers into interactive experiences with Libertify.

Large Language Models in Healthcare and Finance

The survey extensively covers domain-specific applications, demonstrating how large language models are being adapted to tackle specialized challenges across industries.

In healthcare, LLMs have been integrated across multiple applications: medical image analysis (correlating visual data with clinical reports), clinical decision support (parsing clinical notes and providing evidence-based recommendations), medical documentation (automating EHR creation), drug discovery (mining chemical libraries), personalized medicine, and patient engagement through intelligent virtual assistants. Med-PaLM, a derivative of Google’s PaLM model, achieved state-of-the-art accuracy on the MultiMedQA benchmark, with Flan-PaLM scoring 67.6% on MedQA compared to 50.3% for PubMedGPT and 44.4% for Galactica. Through instruction tuning, Med-PaLM significantly reduced the gap to clinician-level performance on several evaluation axes.

In finance, LLMs are being applied to algorithmic trading (analyzing unstructured data for market sentiment), risk management (interpreting regulatory documents), customer service automation, and fraud detection. The survey examines four major financial LLMs: BloombergGPT (50B parameters, pre-trained on 363B financial tokens), FinMA (based on LLaMA), InvestLM, and FinGPT. Adaptation techniques include domain-specific pre-training, continual pre-training, mixed-domain training, and custom tokenization for financial terminology. FLANG-ELECTRA achieved the best sentiment analysis results (92% F1), while FinMA-30B and GPT-4 achieved similar performance (87% F1) with 5-shot prompting.

The survey also highlights applications in education (personalized tutoring and content generation), law (legal document analysis and compliance checking), and scientific research (hypothesis generation and literature review). For organizations navigating AI regulation, understanding these domain-specific capabilities and limitations is critical for responsible deployment.

LLM Foundations: Pre-training, Architecture, and Adaptation

The survey provides deep technical coverage of the foundational building blocks that enable large language models to achieve their remarkable capabilities.

Pre-training strategies fall into three categories: unsupervised (learning from raw text without labels), supervised (learning from labeled datasets), and semi-supervised (combining both approaches). The choice of pre-training approach significantly impacts model performance and adaptability. Data sources range from general corpora (CommonCrawl, Wikipedia, BookCorpus) to specialized datasets (scientific literature, code repositories). Critical preprocessing steps — quality filtering, deduplication, privacy reduction, and tokenization — play an outsized role in determining final model quality.

Model adaptation techniques bridge the gap between general pre-training and specific use cases. Instruction tuning fine-tunes models on diverse multitask datasets with natural language descriptions, while alignment tuning (exemplified by RLHF — Reinforcement Learning from Human Feedback) adjusts model behavior to match desired human values and preferences. Parameter-efficient methods like LoRA enable adaptation with minimal computational overhead.

The transformer architecture remains the dominant framework, with the survey detailing its components: encoder-decoder structures, causal decoders (used by GPT), prefix decoders, self-attention mechanisms, normalization methods (LayerNorm, RMSNorm), activation functions (GELU, SwiGLU), and positional embeddings (absolute, relative, RoPE). Emerging architectures like State Space Models (Mamba) are also covered, suggesting potential alternatives to the attention-based paradigm for specific use cases.

Memory-efficient adaptation techniques — including pruning, quantization, low-rank factorization, and knowledge distillation — are enabling the rise of Small Language Models (SLMs) like DistilBERT and Gemma, which bring LLM capabilities to edge devices and resource-constrained environments. This democratization of AI access represents a significant trend documented by the survey, connecting to broader discussions about efficient attention mechanisms.

Retrieval-Augmented Generation and LLM Planning

The survey examines advanced utilization strategies that extend LLM capabilities beyond pure text generation, with particular focus on Retrieval-Augmented Generation (RAG) and planning frameworks.

RAG addresses one of the most significant LLM limitations — the tendency to generate plausible but factually incorrect information (hallucination). By combining LLMs with external knowledge bases, RAG allows models to retrieve relevant information during generation, enhancing accuracy and credibility. The survey covers the full RAG pipeline, from retrieval mechanisms to integration strategies, making this one of the most practically relevant sections for enterprise AI deployment.

The planning capabilities of LLMs represent another frontier explored in the survey. Complex tasks are decomposed into manageable sub-tasks, with the model generating and executing plans of action. The survey distinguishes between text-based and programmatic planning approaches, highlighting the critical role of feedback and plan refinement mechanisms. Commonsense knowledge — the ability to reason about everyday scenarios and physical phenomena — underpins effective planning and remains an active area of research.

The LLM-modulo framework integrates external systems to handle tasks where pure language reasoning is insufficient. This architecture allows LLMs to delegate precise calculations to calculators, verify facts against knowledge bases, and execute generated code — combining the model’s natural language understanding with the reliability of specialized tools. This hybrid approach is increasingly seen as the practical path to reliable AI systems in production environments.

Transform your AI research documents into engaging interactive experiences — from PDF to interactive in minutes.

Limitations, Challenges, and Future Directions for LLMs

Despite their remarkable capabilities, the large language models survey is candid about the significant challenges that remain. Understanding these limitations is crucial for anyone deploying or relying on LLM technology.

Hallucination remains perhaps the most critical limitation — models can generate text that is fluent, coherent, and entirely wrong. This is particularly dangerous in high-stakes domains like healthcare and finance where factual accuracy is paramount. The survey notes that even GPT-4 “is not fully reliable” and “does not learn from experience,” highlighting that scale alone does not solve this fundamental challenge.

Bias and ethical concerns pervade LLM development. Training data sourced from the internet inevitably contains societal biases, which models can amplify. The Llama team, for instance, acknowledges the presence of biases and toxicity due to web data. Anthropic’s work on Constitutional AI and safety-focused training represents one approach to mitigation, but the survey emphasizes that this remains an open research problem.

Environmental and computational costs are substantial. Training models with billions of parameters requires enormous computational resources, raising concerns about sustainability and accessibility. The Chinchilla scaling law’s insight — that many models are undertrained relative to their size — suggests more efficient paths forward, and SLMs represent a parallel track toward democratizing access.

Looking forward, the survey identifies several key research directions: improving interpretability and transparency of model decisions, developing more robust evaluation frameworks for emergent abilities, advancing hybrid architectures (like LLM-modulo) that combine language understanding with reliable external tools, and creating domain-specific models that maintain safety while achieving expert-level performance. The convergence of these research threads will determine whether LLMs fulfill their transformative potential while maintaining the safety and reliability standards that critical applications demand. For more on the evolving AI governance landscape, explore our NIST AI Risk Management Framework analysis.

Frequently Asked Questions

What are the main capabilities of large language models?

Large language models demonstrate remarkable capabilities including text generation, question answering, translation, summarization, and emergent abilities like in-context learning, instruction following, and chain-of-thought reasoning. They can also perform code generation, commonsense reasoning, and arithmetic operations beyond their primary training objective.

What are the key limitations of large language models?

Key limitations include hallucination (generating plausible but incorrect information), data privacy concerns, environmental impact from training costs, inherent biases from training data, limited context windows, inability to learn from experience, and challenges with interpretability and transparency in decision-making.

How do scaling laws affect LLM performance?

Scaling laws show that as model size, dataset size, and computational resources increase, LLM performance improves predictably. The KM scaling law (Kaplan et al.) and Chinchilla scaling law (Hoffmann et al.) provide mathematical frameworks for predicting model performance based on parameters and training data, with emergent abilities appearing at certain scale thresholds.

What is chain-of-thought prompting in large language models?

Chain-of-thought (CoT) prompting is a technique that enhances LLM reasoning by including intermediate reasoning steps in the prompt. This guides the model through structured thought processes, particularly beneficial for arithmetic, logical reasoning, and multi-step problem-solving tasks. CoT effectiveness typically increases with model sizes exceeding 60-100 billion parameters.